Programs

A C# program consists of one or more source files, known formally as compilation units (Compilation units). A source file is an ordered sequence of Unicode characters. Source files typically have a one-to-one correspondence with files in a file system, but this correspondence is not required. For maximal portability, it is recommended that files in a file system be encoded with the UTF-8 encoding.

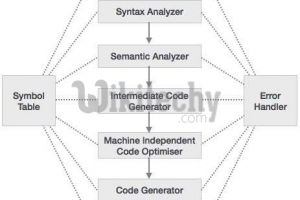

Conceptually speaking, a program is compiled using three steps:

- Transformation, which converts a file from a particular character repertoire and encoding scheme into a sequence of Unicode characters.

- Lexical analysis, which translates a stream of Unicode input characters into a stream of tokens.

- Syntactic analysis, which translates the stream of tokens into executable code.

Grammars

This specification presents the syntax of the C# programming language using two grammars. The lexical grammar (Lexical grammar) defines how Unicode characters are combined to form line terminators, white space, comments, tokens, and pre-processing directives. The syntactic grammar (Syntactic grammar) defines how the tokens resulting from the lexical grammar are combined to form C# programs.

Grammar notation

The lexical and syntactic grammars are presented in Backus-Naur form using the notation of the ANTLR grammar tool.

Lexical grammar

The lexical grammar of C# is presented in Lexical analysis, Tokens, and Pre-processing directives. The terminal symbols of the lexical grammar are the characters of the Unicode character set, and the lexical grammar specifies how characters are combined to form tokens (Tokens), white space (White space), comments (Comments), and pre-processing directives (Pre-processing directives).

Every source file in a C# program must conform to the input production of the lexical grammar (Lexical analysis).

Syntactic grammar

The syntactic grammar of C# is presented in the chapters and appendices that follow this chapter. The terminal symbols of the syntactic grammar are the tokens defined by the lexical grammar, and the syntactic grammar specifies how tokens are combined to form C# programs.

Every source file in a C# program must conform to the compilation_unit production of the syntactic grammar (Compilation units).

Lexical analysis

The input production defines the lexical structure of a C# source file. Each source file in a C# program must conform to this lexical grammar production.


Five basic elements make up the lexical structure of a C# source file: Line terminators (Line terminators), white space (White space), comments (Comments), tokens (Tokens), and pre-processing directives (Pre-processing directives). Of these basic elements, only tokens are significant in the syntactic grammar of a C# program (Syntactic grammar).

The lexical processing of a C# source file consists of reducing the file into a sequence of tokens which becomes the input to the syntactic analysis. Line terminators, white space, and comments can serve to separate tokens, and pre-processing directives can cause sections of the source file to be skipped, but otherwise these lexical elements have no impact on the syntactic structure of a C# program.

In the case of interpolated string literals (Interpolated string literals) a single token is initially produced by lexical analysis, but is broken up into several input elements which are repeatedly subjected to lexical analysis until all interpolated string literals have been resolved. The resulting tokens then serve as input to the syntactic analysis.

When several lexical grammar productions match a sequence of characters in a source file, the lexical processing always forms the longest possible lexical element. For example, the character sequence `//` is processed as the beginning of a single-line comment because that lexical element is longer than a single `/` token.

Line terminators

Line terminators divide the characters of a C# source file into lines.

For compatibility with source code editing tools that add end-of-file markers, and to enable a source file to be viewed as a sequence of properly terminated lines, the following transformations are applied, in order, to every source file in a C# program:

- If the last character of the source file is a Control-Z character (U+001A), this character is deleted.

- A carriage-return character (U+000D) is added to the end of the source file if that source file is non-empty and if the last character of the source file is not a carriage return (U+000D), a line feed (U+000A), a line separator (U+2028), or a paragraph separator (U+2029).
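The two transformations above can be sketched in C++. This is only an illustration, assuming the source file has already been decoded into UTF-16 code units; the function name `normalize_end` is hypothetical and does not come from any C# compiler.

```cpp
#include <string>

// Applies the two end-of-file transformations, in order, to a source file
// held as UTF-16 code units (an assumption made for this sketch).
std::u16string normalize_end(std::u16string src) {
    const char16_t ctrl_z = 0x001A;
    const char16_t cr = 0x000D, lf = 0x000A, ls = 0x2028, ps = 0x2029;

    // Step 1: delete a trailing Control-Z character.
    if (!src.empty() && src.back() == ctrl_z) {
        src.pop_back();
    }
    // Step 2: append a carriage return unless the file is empty or
    // already ends with one of the four line-terminating characters.
    if (!src.empty()) {
        const char16_t last = src.back();
        if (last != cr && last != lf && last != ls && last != ps) {
            src.push_back(cr);
        }
    }
    return src;
}
```

The order matters: a file consisting of text followed by Control-Z first loses the Control-Z and only then receives the carriage return.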

Comments

Two forms of comments are supported: single-line comments and delimited comments. Single-line comments start with the characters `//` and extend to the end of the source line. Delimited comments start with the characters `/*` and end with the characters `*/`. Delimited comments may span multiple lines.

Comments do not nest. The character sequences `/*` and `*/` have no special meaning within a `//` comment, and the character sequences `//` and `/*` have no special meaning within a delimited comment.

Comments are not processed within character and string literals.

The example

includes a delimited comment.

The example

shows several single-line comments.

White space

White space is defined as any character with Unicode class Zs (which includes the space character) as well as the horizontal tab character, the vertical tab character, and the form feed character.
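As a rough sketch, the definition above can be written as a predicate over code points. The function name `is_white_space` is hypothetical, and the hard-coded list of class Zs characters is an assumption based on recent Unicode versions; a real implementation would consult Unicode character data instead.

```cpp
// Returns true if code point `c` is white space under the definition above.
bool is_white_space(char32_t c) {
    // Horizontal tab, vertical tab, and form feed.
    if (c == 0x0009 || c == 0x000B || c == 0x000C) {
        return true;
    }
    // Unicode class Zs (space separators), hard-coded for illustration.
    return c == 0x0020 || c == 0x00A0 || c == 0x1680 ||
           (c >= 0x2000 && c <= 0x200A) ||
           c == 0x202F || c == 0x205F || c == 0x3000;
}
```

Line feed and carriage return are deliberately absent: they are line terminators, which the lexical grammar treats as a separate element.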

Tokens

There are several kinds of tokens: identifiers, keywords, literals, operators, and punctuators. White space and comments are not tokens, though they act as separators for tokens.

Unicode character escape sequences

A Unicode character escape sequence represents a Unicode character. Unicode character escape sequences are processed in identifiers (Identifiers), character literals (Character literals), and regular string literals (String literals). A Unicode character escape is not processed in any other location (for example, to form an operator, punctuator, or keyword).

A Unicode escape sequence represents the single Unicode character formed by the hexadecimal number following the `\u` or `\U` characters. Since C# uses a 16-bit encoding of Unicode code points in characters and string values, a Unicode character in the range U+10000 to U+10FFFF is not permitted in a character literal and is represented using a Unicode surrogate pair in a string literal. Unicode characters with code points above 0x10FFFF are not supported.

Multiple translations are not performed. For instance, the string literal `"\u005Cu005C"` is equivalent to `"\u005C"` rather than `"\"`. The Unicode value `\u005C` is the character `\`.

The example

shows several uses of `\u0066`, which is the escape sequence for the letter 'f'. The program is equivalent to

Identifiers

The rules for identifiers given in this section correspond exactly to those recommended by the Unicode Standard Annex 31, except that underscore is allowed as an initial character (as is traditional in the C programming language), Unicode escape sequences are permitted in identifiers, and the `@` character is allowed as a prefix to enable keywords to be used as identifiers.

For information on the Unicode character classes mentioned above, see The Unicode Standard, Version 3.0, section 4.5.

Examples of valid identifiers include `identifier1`, `_identifier2`, and `@if`.

An identifier in a conforming program must be in the canonical format defined by Unicode Normalization Form C, as defined by Unicode Standard Annex 15. The behavior when encountering an identifier not in Normalization Form C is implementation-defined; however, a diagnostic is not required.

The prefix `@` enables the use of keywords as identifiers, which is useful when interfacing with other programming languages. The character `@` is not actually part of the identifier, so the identifier might be seen in other languages as a normal identifier, without the prefix. An identifier with an `@` prefix is called a verbatim identifier. Use of the `@` prefix for identifiers that are not keywords is permitted, but strongly discouraged as a matter of style.

The example:

defines a class named `class` with a static method named `static` that takes a parameter named `bool`. Note that since Unicode escapes are not permitted in keywords, the token `cl\u0061ss` is an identifier, and is the same identifier as `@class`.

Two identifiers are considered the same if they are identical after the following transformations are applied, in order:

- The prefix `@`, if used, is removed.

- Each unicode_escape_sequence is transformed into its corresponding Unicode character.

- Any formatting_characters are removed.
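The three transformations above can be sketched in C++ as a function that reduces an identifier to a comparable form. All names here (`canonical_identifier`, `is_formatting`, `hex_value`) are hypothetical, the identifier is assumed to be held as UTF-32 code points, and `is_formatting` covers only a small subset of Unicode class Cf for illustration.

```cpp
#include <cstddef>
#include <string>

// Assumption: a small subset of Unicode class Cf, for illustration only.
bool is_formatting(char32_t c) {
    return c == 0x00AD || c == 0x200C || c == 0x200D ||
           c == 0x200E || c == 0x200F || c == 0xFEFF;
}

// Value of a hexadecimal digit, or -1 if `c` is not one.
int hex_value(char32_t c) {
    if (c >= U'0' && c <= U'9') return static_cast<int>(c - U'0');
    if (c >= U'a' && c <= U'f') return static_cast<int>(c - U'a') + 10;
    if (c >= U'A' && c <= U'F') return static_cast<int>(c - U'A') + 10;
    return -1;
}

std::u32string canonical_identifier(const std::u32string& id) {
    std::u32string out;
    // Step 1: skip the '@' prefix, if present.
    std::size_t i = (!id.empty() && id[0] == U'@') ? 1 : 0;
    while (i < id.size()) {
        char32_t c = id[i];
        // Step 2: decode \uXXXX (4 digits) or \UXXXXXXXX (8 digits).
        std::size_t digits = 0;
        if (c == U'\\' && i + 1 < id.size()) {
            if (id[i + 1] == U'u') digits = 4;
            else if (id[i + 1] == U'U') digits = 8;
        }
        bool decoded = false;
        if (digits != 0 && i + 2 + digits <= id.size()) {
            char32_t value = 0;
            decoded = true;
            for (std::size_t k = 0; k < digits && decoded; ++k) {
                const int h = hex_value(id[i + 2 + k]);
                if (h < 0) decoded = false;
                else value = value * 16 + static_cast<char32_t>(h);
            }
            if (decoded) {
                c = value;
                i += 2 + digits;
            }
        }
        if (!decoded) ++i;
        // Step 3: drop formatting characters.
        if (!is_formatting(c)) out.push_back(c);
    }
    return out;
}
```

Under this sketch, `@class` and `cl\u0061ss` both reduce to `class`, so they compare as the same identifier.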

Identifiers containing two consecutive underscore characters (U+005F) are reserved for use by the implementation. For example, an implementation might provide extended keywords that begin with two underscores.

Keywords

A keyword is an identifier-like sequence of characters that is reserved, and cannot be used as an identifier except when prefaced by the `@` character.

In some places in the grammar, specific identifiers have special meaning, but are not keywords. Such identifiers are sometimes referred to as 'contextual keywords'. For example, within a property declaration, the `get` and `set` identifiers have special meaning (Accessors). An identifier other than `get` or `set` is never permitted in these locations, so this use does not conflict with a use of these words as identifiers. In other cases, such as with the identifier `var` in implicitly typed local variable declarations (Local variable declarations), a contextual keyword can conflict with declared names. In such cases, the declared name takes precedence over the use of the identifier as a contextual keyword.

Literals

A literal is a source code representation of a value.

Boolean literals

There are two boolean literal values: `true` and `false`.

The type of a boolean_literal is `bool`.

Integer literals

Integer literals are used to write values of types `int`, `uint`, `long`, and `ulong`. Integer literals have two possible forms: decimal and hexadecimal.

The type of an integer literal is determined as follows:

- If the literal has no suffix, it has the first of these types in which its value can be represented: `int`, `uint`, `long`, `ulong`.

- If the literal is suffixed by `U` or `u`, it has the first of these types in which its value can be represented: `uint`, `ulong`.

- If the literal is suffixed by `L` or `l`, it has the first of these types in which its value can be represented: `long`, `ulong`.

- If the literal is suffixed by `UL`, `Ul`, `uL`, `ul`, `LU`, `Lu`, `lU`, or `lu`, it is of type `ulong`.
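The suffix rules above amount to a small decision procedure. The following C++ sketch is an illustration only: the `Suffix` enum and `literal_type` function are hypothetical names, the literal's value is assumed to have already been parsed into an unsigned 64-bit integer, and the special treatment of literals that follow a unary minus is deliberately not handled.

```cpp
#include <cstdint>
#include <string>

// Hypothetical classification of the integer_type_suffix.
enum class Suffix { None, U, L, UL };

// Returns the name of the C# type chosen for an integer literal.
std::string literal_type(std::uint64_t value, Suffix suffix) {
    const std::uint64_t int_max = 2147483647ULL;            // 2^31 - 1
    const std::uint64_t uint_max = 4294967295ULL;           // 2^32 - 1
    const std::uint64_t long_max = 9223372036854775807ULL;  // 2^63 - 1

    switch (suffix) {
    case Suffix::None:  // first of int, uint, long, ulong that fits
        if (value <= int_max) return "int";
        if (value <= uint_max) return "uint";
        if (value <= long_max) return "long";
        return "ulong";
    case Suffix::U:     // first of uint, ulong that fits
        return value <= uint_max ? "uint" : "ulong";
    case Suffix::L:     // first of long, ulong that fits
        return value <= long_max ? "long" : "ulong";
    case Suffix::UL:    // always ulong
        return "ulong";
    }
    return "ulong";  // not reached; keeps compilers quiet
}
```

For instance, an unsuffixed 2147483648 does not fit in `int`, so the sketch reports `uint`.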

If the value represented by an integer literal is outside the range of the `ulong` type, a compile-time error occurs.

As a matter of style, it is suggested that `L` be used instead of `l` when writing literals of type `long`, since it is easy to confuse the letter `l` with the digit `1`.

To permit the smallest possible `int` and `long` values to be written as decimal integer literals, the following two rules exist:

- When a decimal_integer_literal with the value 2147483648 (2^31) and no integer_type_suffix appears as the token immediately following a unary minus operator token (Unary minus operator), the result is a constant of type `int` with the value -2147483648 (-2^31). In all other situations, such a decimal_integer_literal is of type `uint`.

- When a decimal_integer_literal with the value 9223372036854775808 (2^63) and no integer_type_suffix or the integer_type_suffix `L` or `l` appears as the token immediately following a unary minus operator token (Unary minus operator), the result is a constant of type `long` with the value -9223372036854775808 (-2^63). In all other situations, such a decimal_integer_literal is of type `ulong`.

Real literals

Real literals are used to write values of types `float`, `double`, and `decimal`.

If no real_type_suffix is specified, the type of the real literal is `double`. Otherwise, the real type suffix determines the type of the real literal, as follows:

- A real literal suffixed by `F` or `f` is of type `float`. For example, the literals `1f`, `1.5f`, `1e10f`, and `123.456F` are all of type `float`.

- A real literal suffixed by `D` or `d` is of type `double`. For example, the literals `1d`, `1.5d`, `1e10d`, and `123.456D` are all of type `double`.

- A real literal suffixed by `M` or `m` is of type `decimal`. For example, the literals `1m`, `1.5m`, `1e10m`, and `123.456M` are all of type `decimal`. This literal is converted to a `decimal` value by taking the exact value, and, if necessary, rounding to the nearest representable value using banker's rounding (The decimal type). Any scale apparent in the literal is preserved unless the value is rounded or the value is zero (in which latter case the sign and scale will be 0). Hence, the literal `2.900m` will be parsed to form the decimal with sign `0`, coefficient `2900`, and scale `3`.

If the specified literal cannot be represented in the indicated type, a compile-time error occurs.

The value of a real literal of type `float` or `double` is determined by using the IEEE 'round to nearest' mode.

Note that in a real literal, decimal digits are always required after the decimal point. For example, `1.3F` is a real literal but `1.F` is not.

Character literals

A character literal represents a single character, and usually consists of a character in quotes, as in `'a'`.

Note: The ANTLR grammar notation makes the following confusing! In ANTLR, when you write `\'` it stands for a single quote `'`. And when you write `\\` it stands for a single backslash `\`. The eleven possible simple escape sequences are `\'`, `\"`, `\\`, `\0`, `\a`, `\b`, `\f`, `\n`, `\r`, `\t`, `\v`.

A character that follows a backslash character (`\`) in a character literal must be one of the following characters: `'`, `"`, `\`, `0`, `a`, `b`, `f`, `n`, `r`, `t`, `u`, `U`, `x`, `v`. Otherwise, a compile-time error occurs.

A hexadecimal escape sequence represents a single Unicode character, with the value formed by the hexadecimal number following `\x`.

If the value represented by a character literal is greater than U+FFFF, a compile-time error occurs.

A Unicode character escape sequence (Unicode character escape sequences) in a character literal must be in the range U+0000 to U+FFFF.

A simple escape sequence represents a Unicode character encoding, as described in the table below.

| Escape sequence | Character name | Unicode encoding |
|---|---|---|
| `\'` | Single quote | 0x0027 |
| `\"` | Double quote | 0x0022 |
| `\\` | Backslash | 0x005C |
| `\0` | Null | 0x0000 |
| `\a` | Alert | 0x0007 |
| `\b` | Backspace | 0x0008 |
| `\f` | Form feed | 0x000C |
| `\n` | New line | 0x000A |
| `\r` | Carriage return | 0x000D |
| `\t` | Horizontal tab | 0x0009 |
| `\v` | Vertical tab | 0x000B |
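In a hand-written lexer, the table above typically becomes a lookup from the character after the backslash to a code point. A minimal C++ sketch follows; the function name `simple_escape_value` and the use of -1 to signal "not a simple escape" are assumptions of this example.

```cpp
#include <map>

// Maps the character following a backslash to the code point of the
// simple escape sequence, or returns -1 if it is not a simple escape.
int simple_escape_value(char c) {
    static const std::map<char, int> table = {
        {'\'', 0x0027}, {'"', 0x0022}, {'\\', 0x005C}, {'0', 0x0000},
        {'a', 0x0007},  {'b', 0x0008}, {'f', 0x000C},  {'n', 0x000A},
        {'r', 0x000D},  {'t', 0x0009}, {'v', 0x000B},
    };
    const auto it = table.find(c);
    return it == table.end() ? -1 : it->second;
}
```

The characters `u`, `U`, and `x` are intentionally missing: they introduce Unicode and hexadecimal escape sequences, which need their digits parsed separately.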

The type of a character_literal is `char`.

String literals

C# supports two forms of string literals: regular string literals and verbatim string literals.

A regular string literal consists of zero or more characters enclosed in double quotes, as in `"hello"`, and may include both simple escape sequences (such as `\t` for the tab character), and hexadecimal and Unicode escape sequences.

A verbatim string literal consists of an `@` character followed by a double-quote character, zero or more characters, and a closing double-quote character. A simple example is `@"hello"`. In a verbatim string literal, the characters between the delimiters are interpreted verbatim, the only exception being a quote_escape_sequence. In particular, simple escape sequences, and hexadecimal and Unicode escape sequences are not processed in verbatim string literals. A verbatim string literal may span multiple lines.

A character that follows a backslash character (`\`) in a regular string literal must be one of the following characters: `'`, `"`, `\`, `0`, `a`, `b`, `f`, `n`, `r`, `t`, `u`, `U`, `x`, `v`. Otherwise, a compile-time error occurs.

The example

shows a variety of string literals. The last string literal, `j`, is a verbatim string literal that spans multiple lines. The characters between the quotation marks, including white space such as new line characters, are preserved verbatim.

Since a hexadecimal escape sequence can have a variable number of hex digits, the string literal `"\x123"` contains a single character with hex value 123. To create a string containing the character with hex value 12 followed by the character 3, one could write `"\x00123"` or `"\x12" + "3"` instead.

The type of a string_literal is `string`.

Each string literal does not necessarily result in a new string instance. When two or more string literals that are equivalent according to the string equality operator (String equality operators) appear in the same program, these string literals refer to the same string instance. For instance, the output produced by

is `True` because the two literals refer to the same string instance.

Interpolated string literals

Interpolated string literals are similar to string literals, but contain holes delimited by `{` and `}`, wherein expressions can occur. At runtime, the expressions are evaluated with the purpose of having their textual forms substituted into the string at the place where the hole occurs. The syntax and semantics of string interpolation are described in section (Interpolated strings).

Like string literals, interpolated string literals can be either regular or verbatim. Interpolated regular string literals are delimited by `$"` and `"`, and interpolated verbatim string literals are delimited by `$@"` and `"`.

Like other literals, lexical analysis of an interpolated string literal initially results in a single token, as per the grammar below. However, before syntactic analysis, the single token of an interpolated string literal is broken into several tokens for the parts of the string enclosing the holes, and the input elements occurring in the holes are lexically analysed again. This may in turn produce more interpolated string literals to be processed, but, if lexically correct, will eventually lead to a sequence of tokens for syntactic analysis to process.

An interpolated_string_literal token is reinterpreted as multiple tokens and other input elements as follows, in order of occurrence in the interpolated_string_literal:

- Occurrences of the following are reinterpreted as separate individual tokens: the leading `$` sign, interpolated_regular_string_whole, interpolated_regular_string_start, interpolated_regular_string_mid, interpolated_regular_string_end, interpolated_verbatim_string_whole, interpolated_verbatim_string_start, interpolated_verbatim_string_mid and interpolated_verbatim_string_end.

- Occurrences of regular_balanced_text and verbatim_balanced_text between these are reprocessed as an input_section (Lexical analysis) and are reinterpreted as the resulting sequence of input elements. These may in turn include interpolated string literal tokens to be reinterpreted.

Syntactic analysis will recombine the tokens into an interpolated_string_expression (Interpolated strings).

Examples TODO

The null literal

The null_literal can be implicitly converted to a reference type or nullable type.

Operators and punctuators

There are several kinds of operators and punctuators. Operators are used in expressions to describe operations involving one or more operands. For example, the expression `a + b` uses the `+` operator to add the two operands `a` and `b`. Punctuators are for grouping and separating.

The vertical bar in the right_shift and right_shift_assignment productions is used to indicate that, unlike other productions in the syntactic grammar, no characters of any kind (not even whitespace) are allowed between the tokens. These productions are treated specially in order to enable the correct handling of type_parameter_lists (Type parameters).

Pre-processing directives

The pre-processing directives provide the ability to conditionally skip sections of source files, to report error and warning conditions, and to delineate distinct regions of source code. The term 'pre-processing directives' is used only for consistency with the C and C++ programming languages. In C#, there is no separate pre-processing step; pre-processing directives are processed as part of the lexical analysis phase.

The following pre-processing directives are available:

- `#define` and `#undef`, which are used to define and undefine, respectively, conditional compilation symbols (Declaration directives).

- `#if`, `#elif`, `#else`, and `#endif`, which are used to conditionally skip sections of source code (Conditional compilation directives).

- `#line`, which is used to control line numbers emitted for errors and warnings (Line directives).

- `#error` and `#warning`, which are used to issue errors and warnings, respectively (Diagnostic directives).

- `#region` and `#endregion`, which are used to explicitly mark sections of source code (Region directives).

- `#pragma`, which is used to specify optional contextual information to the compiler (Pragma directives).

A pre-processing directive always occupies a separate line of source code and always begins with a `#` character and a pre-processing directive name. White space may occur before the `#` character and between the `#` character and the directive name.

A source line containing a `#define`, `#undef`, `#if`, `#elif`, `#else`, `#endif`, `#line`, or `#endregion` directive may end with a single-line comment. Delimited comments (the `/* */` style of comments) are not permitted on source lines containing pre-processing directives.

Pre-processing directives are not tokens and are not part of the syntactic grammar of C#. However, pre-processing directives can be used to include or exclude sequences of tokens and can in that way affect the meaning of a C# program. For example, when compiled, the program:

results in the exact same sequence of tokens as the program:

Thus, whereas lexically, the two programs are quite different, syntactically, they are identical.

Conditional compilation symbols

The conditional compilation functionality provided by the `#if`, `#elif`, `#else`, and `#endif` directives is controlled through pre-processing expressions (Pre-processing expressions) and conditional compilation symbols.

A conditional compilation symbol has two possible states: defined or undefined. At the beginning of the lexical processing of a source file, a conditional compilation symbol is undefined unless it has been explicitly defined by an external mechanism (such as a command-line compiler option). When a `#define` directive is processed, the conditional compilation symbol named in that directive becomes defined in that source file. The symbol remains defined until an `#undef` directive for that same symbol is processed, or until the end of the source file is reached. An implication of this is that `#define` and `#undef` directives in one source file have no effect on other source files in the same program.

When referenced in a pre-processing expression, a defined conditional compilation symbol has the boolean value `true`, and an undefined conditional compilation symbol has the boolean value `false`. There is no requirement that conditional compilation symbols be explicitly declared before they are referenced in pre-processing expressions. Instead, undeclared symbols are simply undefined and thus have the value `false`.

The name space for conditional compilation symbols is distinct and separate from all other named entities in a C# program. Conditional compilation symbols can only be referenced in `#define` and `#undef` directives and in pre-processing expressions.

Pre-processing expressions

Pre-processing expressions can occur in `#if` and `#elif` directives. The operators `!`, `==`, `!=`, `&&` and `||` are permitted in pre-processing expressions, and parentheses may be used for grouping.

When referenced in a pre-processing expression, a defined conditional compilation symbol has the boolean value `true`, and an undefined conditional compilation symbol has the boolean value `false`.

Evaluation of a pre-processing expression always yields a boolean value. The rules of evaluation for a pre-processing expression are the same as those for a constant expression (Constant expressions), except that the only user-defined entities that can be referenced are conditional compilation symbols.

Declaration directives

The declaration directives are used to define or undefine conditional compilation symbols.

The processing of a `#define` directive causes the given conditional compilation symbol to become defined, starting with the source line that follows the directive. Likewise, the processing of an `#undef` directive causes the given conditional compilation symbol to become undefined, starting with the source line that follows the directive.

Any `#define` and `#undef` directives in a source file must occur before the first token (Tokens) in the source file; otherwise a compile-time error occurs. In intuitive terms, `#define` and `#undef` directives must precede any 'real code' in the source file.

The example:

is valid because the `#define` directives precede the first token (the `namespace` keyword) in the source file.

The following example results in a compile-time error because a `#define` follows real code:

A `#define` may define a conditional compilation symbol that is already defined, without there being any intervening `#undef` for that symbol. The example below defines a conditional compilation symbol `A` and then defines it again.

A `#undef` may 'undefine' a conditional compilation symbol that is not defined. The example below defines a conditional compilation symbol `A` and then undefines it twice; although the second `#undef` has no effect, it is still valid.

Conditional compilation directives

The conditional compilation directives are used to conditionally include or exclude portions of a source file.

As indicated by the syntax, conditional compilation directives must be written as sets consisting of, in order, an `#if` directive, zero or more `#elif` directives, zero or one `#else` directive, and an `#endif` directive. Between the directives are conditional sections of source code. Each section is controlled by the immediately preceding directive. A conditional section may itself contain nested conditional compilation directives provided these directives form complete sets.

A pp_conditional selects at most one of the contained conditional_sections for normal lexical processing:

- The pp_expressions of the `#if` and `#elif` directives are evaluated in order until one yields `true`. If an expression yields `true`, the conditional_section of the corresponding directive is selected.

- If all pp_expressions yield `false`, and if an `#else` directive is present, the conditional_section of the `#else` directive is selected.

- Otherwise, no conditional_section is selected.
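The selection rule above can be sketched as a short C++ function. The name `select_section` is hypothetical, and the sketch assumes the pp_expressions of the `#if` and `#elif` directives have already been evaluated to booleans, in source order.

```cpp
#include <cstddef>
#include <vector>

// conditions[0] is the #if expression; later entries are the #elif
// expressions in order. Returns the index of the selected section
// (conditions.size() denotes the #else section), or -1 if none.
int select_section(const std::vector<bool>& conditions, bool has_else) {
    for (std::size_t i = 0; i < conditions.size(); ++i) {
        if (conditions[i]) {
            return static_cast<int>(i);  // first expression yielding true
        }
    }
    // Every expression yielded false: fall back to #else if present.
    return has_else ? static_cast<int>(conditions.size()) : -1;
}
```

Because the scan stops at the first `true`, a later `#elif` whose expression is also true is never selected, which matches the "at most one" wording.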

The selected conditional_section, if any, is processed as a normal input_section: the source code contained in the section must adhere to the lexical grammar; tokens are generated from the source code in the section; and pre-processing directives in the section have the prescribed effects.

The remaining conditional_sections, if any, are processed as skipped_sections: except for pre-processing directives, the source code in the section need not adhere to the lexical grammar; no tokens are generated from the source code in the section; and pre-processing directives in the section must be lexically correct but are not otherwise processed. Within a conditional_section that is being processed as a skipped_section, any nested conditional_sections (contained in nested `#if`...`#endif` and `#region`...`#endregion` constructs) are also processed as skipped_sections.

The following example illustrates how conditional compilation directives can nest:

Except for pre-processing directives, skipped source code is not subject to lexical analysis. For example, the following is valid despite the unterminated comment in the `#else` section:

Note, however, that pre-processing directives are required to be lexically correct even in skipped sections of source code.

Pre-processing directives are not processed when they appear inside multi-line input elements. For example, the program:

results in the output:

In peculiar cases, the set of pre-processing directives that is processed might depend on the evaluation of the pp_expression. The example:

always produces the same token stream (`class` `Q` `{` `}`), regardless of whether or not `X` is defined. If `X` is defined, the only processed directives are `#if` and `#endif`, due to the multi-line comment. If `X` is undefined, then three directives (`#if`, `#else`, `#endif`) are part of the directive set.

Diagnostic directives

The diagnostic directives are used to explicitly generate error and warning messages that are reported in the same way as other compile-time errors and warnings.

The example:

always produces a warning ('Code review needed before check-in'), and produces a compile-time error ('A build can't be both debug and retail') if the conditional symbols `Debug` and `Retail` are both defined. Note that a pp_message can contain arbitrary text; specifically, it need not contain well-formed tokens, as shown by the single quote in the word `can't`.

Region directives

The region directives are used to explicitly mark regions of source code.

No semantic meaning is attached to a region; regions are intended for use by the programmer or by automated tools to mark a section of source code. The message specified in a `#region` or `#endregion` directive likewise has no semantic meaning; it merely serves to identify the region. Matching `#region` and `#endregion` directives may have different pp_messages.

The lexical processing of a region:

corresponds exactly to the lexical processing of a conditional compilation directive of the form:

Line directives

Line directives may be used to alter the line numbers and source file names that are reported by the compiler in output such as warnings and errors, and that are used by caller info attributes (Caller info attributes).

Line directives are most commonly used in meta-programming tools that generate C# source code from some other text input.

When no `#line` directives are present, the compiler reports true line numbers and source file names in its output. When processing a `#line` directive that includes a line_indicator that is not `default`, the compiler treats the line after the directive as having the given line number (and file name, if specified).

A `#line default` directive reverses the effect of all preceding `#line` directives. The compiler reports true line information for subsequent lines, precisely as if no `#line` directives had been processed.

A `#line hidden` directive has no effect on the file and line numbers reported in error messages, but does affect source level debugging. When debugging, all lines between a `#line hidden` directive and the subsequent `#line` directive (that is not `#line hidden`) have no line number information. When stepping through code in the debugger, these lines will be skipped entirely.

Note that a file_name differs from a regular string literal in that escape characters are not processed; the `\` character simply designates an ordinary backslash character within a file_name.

Pragma directives

The #pragma preprocessing directive is used to specify optional contextual information to the compiler. The information supplied in a #pragma directive will never change program semantics.

C# provides #pragma directives to control compiler warnings. Future versions of the language may include additional #pragma directives. To ensure interoperability with other C# compilers, the Microsoft C# compiler does not issue compilation errors for unknown #pragma directives; such directives do however generate warnings.

Pragma warning

The #pragma warning directive is used to disable or restore all or a particular set of warning messages during compilation of the subsequent program text.

A #pragma warning directive that omits the warning list affects all warnings. A #pragma warning directive that includes a warning list affects only those warnings that are specified in the list.

A #pragma warning disable directive disables all or the given set of warnings.

A #pragma warning restore directive restores all or the given set of warnings to the state that was in effect at the beginning of the compilation unit. Note that if a particular warning was disabled externally, a #pragma warning restore (whether for all or the specific warning) will not re-enable that warning.

The following example shows use of #pragma warning to temporarily disable the warning reported when obsoleted members are referenced, using the warning number from the Microsoft C# compiler.

NOTE: I'm compiling with the C++14 flag. I am trying to create a very simple lexer in C++. I am using regular expressions to identify different tokens. My program is able to identify the tokens and display them, but the output is of the form

I want the output to be in the form

I am not able to achieve the above output.


Here is my program:

What is the best way to do this while also maintaining the order of the tokens as they appear in the source program?

Brian Tompsett - 汤莱恩

kshitij singh

2 Answers

This is a quick and dirty solution iterating on each pattern, and for each pattern trying to match the entire string, then iterating over matches and storing each match with its position in a map. The map implicitly sorts the matches by key (position) for you, so then you just need to iterate the map to get the matches in positional order, regardless of their pattern name.

Output:

Sheljohn
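The approach described in this first answer can be sketched roughly as follows. This is a minimal illustration, not the answerer's original code: the function name tokenize_by_position, the Token alias, and the three patterns are all assumptions made for the example.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <regex>
#include <string>
#include <utility>
#include <vector>

// (token name, lexeme) pair; the alias is illustrative.
using Token = std::pair<std::string, std::string>;

std::vector<Token> tokenize_by_position(const std::string& input) {
    // Hypothetical pattern table: (token name, regular expression).
    const std::vector<std::pair<std::string, std::string>> patterns = {
        {"number", "[0-9]+"},
        {"identifier", "[a-z]+"},
        {"operator", "[+*]"},
    };

    // Key = position of the match in the input, so the map keeps
    // the matches sorted in source order.
    std::map<std::size_t, Token> by_position;
    for (const auto& p : patterns) {
        const std::regex re(p.second);
        for (std::sregex_iterator it(input.begin(), input.end(), re), end;
             it != end; ++it) {
            const auto pos = static_cast<std::size_t>(it->position());
            by_position[pos] = Token(p.first, it->str());
        }
    }

    // Walk the map to collect the tokens in positional order.
    std::vector<Token> tokens;
    for (const auto& entry : by_position) {
        tokens.push_back(entry.second);
    }
    return tokens;
}
```

Calling tokenize_by_position("a + 12 * b") yields the five tokens in the order they appear in the input. Note that if two patterns match at the same position, the later pattern in the table overwrites the earlier one, so this sketch only suits pattern sets that do not overlap.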

I managed to do this with only one iteration over the parsed string. All you have to do is add parentheses around the regex for each token type; then you'll be able to access the strings of these submatches. If you get a non-empty string for a submatch, that means it was matched. You know the index of the submatch and therefore the index in v.

std::regex re(reg, std::regex::extended); is used to match the longest string, which is necessary for a lexical analyzer. Otherwise it might identify while1213 as while followed by the number 1213, depending on the order in which you define the regexes.
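A minimal sketch of this single-pass idea is given below. It is not the answerer's original code: the function name tokenize_single_pass, the Token alias, and the contents of the table v are assumptions chosen for illustration. Whether the extended grammar really prefers the longest alternative can depend on the standard library implementation, so the usage example avoids inputs where that matters.

```cpp
#include <cassert>
#include <cstddef>
#include <regex>
#include <string>
#include <utility>
#include <vector>

// (token name, lexeme) pair; the alias is illustrative.
using Token = std::pair<std::string, std::string>;

std::vector<Token> tokenize_single_pass(const std::string& input) {
    // Hypothetical table v: (token name, regular expression).
    const std::vector<std::pair<std::string, std::string>> v = {
        {"keyword", "while|if"},
        {"identifier", "[a-z][a-z0-9]*"},
        {"number", "[0-9]+"},
        {"operator", "[+*]"},
    };

    // Build "(p0)|(p1)|..." so that capture group i + 1 belongs to v[i].
    std::string reg;
    for (std::size_t i = 0; i < v.size(); ++i) {
        if (i > 0) reg += "|";
        reg += "(" + v[i].second + ")";
    }
    const std::regex re(reg, std::regex::extended);

    std::vector<Token> tokens;
    for (std::sregex_iterator it(input.begin(), input.end(), re), end;
         it != end; ++it) {
        // The first group that participated in the match names the token.
        for (std::size_t i = 0; i < v.size(); ++i) {
            if ((*it)[i + 1].matched) {
                tokens.emplace_back(v[i].first, it->str());
                break;
            }
        }
    }
    return tokens;
}
```

For example, tokenize_single_pass("x1 + 42 * y") produces identifier, operator, number, operator, identifier, in source order, with a single scan of the input.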


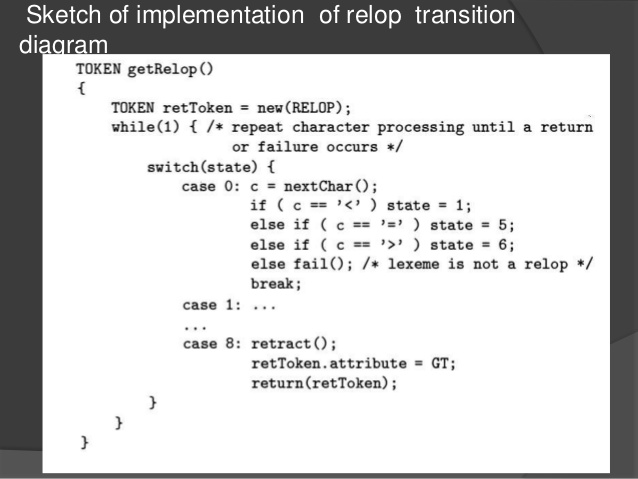

Describe how lexical analyzer generators are used to translate regular expressions into deterministic finite automata?

Lexical analyzer generators translate regular expressions (the lexical analyzer definition) into finite automata (the lexical analyzer). For example, a lexical analyzer definition may specify a number of regular expressions describing different lexical forms (integer, string, identifier, comment, etc.). The lexical analyzer generator would then translate that definition into a program module that can use the deterministic finite automata to analyze text and split it into lexemes (tokens).
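To make the idea concrete, here is a hand-written sketch of the kind of deterministic finite automaton such a generator might emit for two lexical forms, an identifier (a letter followed by letters or digits) and an integer (one or more digits). The state names and the functions step and classify are assumptions for illustration; real generators typically emit transition tables rather than a switch.

```cpp
#include <cassert>
#include <cctype>
#include <string>

// States of a small DFA recognizing identifiers and integers.
enum class State { Start, InIdent, InNumber, Reject };

// Transition function: one input character moves the DFA to the next state.
State step(State s, char c) {
    const unsigned char u = static_cast<unsigned char>(c);
    const bool letter = std::isalpha(u) != 0;
    const bool digit = std::isdigit(u) != 0;
    switch (s) {
        case State::Start:
            if (letter) return State::InIdent;
            if (digit) return State::InNumber;
            return State::Reject;
        case State::InIdent:
            return (letter || digit) ? State::InIdent : State::Reject;
        case State::InNumber:
            return digit ? State::InNumber : State::Reject;
        case State::Reject:
            return State::Reject;
    }
    return State::Reject;
}

// Runs the DFA over a whole lexeme and reports the accepting state, if any.
// Returns "identifier", "integer", or "error".
std::string classify(const std::string& lexeme) {
    State s = State::Start;
    for (char c : lexeme) {
        s = step(s, c);
    }
    if (s == State::InIdent) return "identifier";
    if (s == State::InNumber) return "integer";
    return "error";
}
```

With this sketch, classify("count1") returns "identifier", classify("42") returns "integer", and classify("4x") returns "error" because no transition leaves the number state on a letter.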

What is lexical analysis?

A compiler is usually divided into different phases. The input to the compiler is the source program and the output is a target program. The lexical analyzer is the first phase of a compiler and takes the source program as input. It scans the source program from left to right and produces tokens as output. A token can be seen as a sequence of characters having a collective meaning. The lexical analyzer is also called by names like scanner…

What are the functions of lexical analyzer?

The lexical analyzer function, named after rule declarations, recognizes tokens from the input stream and returns them to the parser.

1. What are three reasons why syntax analyzers are based on grammars?

Simplicity: Techniques for lexical analysis are less complex than those required for syntax analysis, so the lexical-analysis process can be simpler if it is separate. Also, removing the low-level details of lexical analysis from the syntax analyzer makes the syntax analyzer both smaller and less complex. Efficiency: Although it pays to optimize the lexical analyzer, because lexical analysis requires a significant portion of total compilation time, it is not fruitful to optimize the syntax analyzer…

Why is a lexical analyzer known as a scanner?

Lexical analysis involves scanning the program to be compiled and recognizing the tokens that make up the source statements. Scanners are usually designed to recognize keywords, operators, and identifiers, as well as integers, floating point numbers, character strings, and so on, which is why lexical analyzers are known as scanners.

What is the application of regular expression in compiler construction?

Regular Expression is another way of implementing a lexical analyzer or scanner.

A language like C allows the same name to be used for two different variables. Is it possible for a lexical analyser to distinguish between such variables?

Using the same name for two different variables in languages like C is possible only within two different scopes, such as outside a block and inside a block: int somevariable; /* outside scope */ { .. int somevariable; /* inside scope */ } In order for a lexical analyzer to distinguish those two variables, it must be able to parse the program and resolve scope the same way the compiler does.

What is ETAP?

What are the possible error recovery actions in lexical Analyzer?

- There are not many types of lexical errors:
  - Illegal character
  - Ill-formed constant
- How are they handled?
  - Discard the offending input and print a message.
  - But if a character in the middle of a lexeme is wrong, do you discard the character or the whole lexeme?
  - Try to correct the error.
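The "discard and print a message" strategy listed above can be sketched as follows. This is only an illustration under simple assumptions: lexemes are runs of letters and digits, any other non-space character counts as illegal, and the names LexResult and lex_with_recovery are hypothetical. Messages are collected in a vector rather than printed so that the caller decides how to report them.

```cpp
#include <cassert>
#include <cctype>
#include <cstddef>
#include <string>
#include <vector>

// Lexemes recognized plus the messages produced during recovery.
struct LexResult {
    std::vector<std::string> lexemes;
    std::vector<std::string> errors;
};

LexResult lex_with_recovery(const std::string& input) {
    LexResult result;
    std::size_t i = 0;
    while (i < input.size()) {
        const unsigned char c = static_cast<unsigned char>(input[i]);
        if (std::isspace(c)) {
            ++i;  // white space separates lexemes
        } else if (std::isalnum(c)) {
            // Consume a maximal run of letters and digits as one lexeme.
            const std::size_t start = i;
            while (i < input.size() &&
                   std::isalnum(static_cast<unsigned char>(input[i]))) {
                ++i;
            }
            result.lexemes.push_back(input.substr(start, i - start));
        } else {
            // Recovery: record a message, discard the character, carry on.
            result.errors.push_back("illegal character '" +
                                    std::string(1, input[i]) +
                                    "' at offset " + std::to_string(i));
            ++i;
        }
    }
    return result;
}
```

Here lex_with_recovery("ab @ 12") returns the lexemes ab and 12 together with one error message. For an input such as "ab@cd", discarding only the bad character splits the text into two lexemes, which is exactly the dilemma raised in the list: whether to drop the character or the whole lexeme.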

What is the definition of Megan?

'MEtaGenome ANalyzer' is a computer program that analyses DNA and RNA.

What are the lexical elements of the C language?

Lexical elements are groups of characters that may legally appear in a source file. Common categories for lexical elements are keywords, numeric and alphanumeric constants, variable references and special tokens, but the exact categorization is subject to the compiler's implementation.

What is lex in system software and assembly language programming subject in M.C.A?

M.C.A could be almost anything, maybe the name of a school or school department. Lex (short for Lexical Analyzer) is a specialized programming language that takes a set of specifications for how to scan text and find specific items in the text, and generates C language source code that is compiled with other code to actually perform the work. The generated code can be used as part of a compiler's lexical scan, scanning for specific words…

Example of lexical clues?

What is a sentence for lexical?

His lexical skills were far better than anyone in the company. This is an example of word for lexical. The instructor defended throwing a book at me to wake me up by saying that he was using a lexical approach.

What is lexical awareness?

Lexical awareness = knowledge of vocabulary (word meanings)

What is a lexical verb?

A lexical verb is simply the main verb in a sentence.

What is the difference between Dry chemistry analyzer and Chemistry analyzer?

The difference between dry chemistry analyzer and the chemistry analyzer is the reagents used.

What is the importance of auxiliary and lexical verbs in a sentence?

The importance of a lexical verb in a sentence is that it is the main verb of the sentence. An auxiliary verb is there to define the lexical verb.

What is source program?

A source program is a set of one or more tokens arranged in a certain lexical order with a certain meaning that represents an algorithm or computer program. For instance: #include <iostream> using namespace std; int main (int argc, char ** argv) { cout << "Hello C++ World!" << endl; for (int i = 0; i < argc; ++i) { cout << "argv[" << i << "]: " << argv[i] << endl; } return 0…

What is the definition of the word Lexical?

Lexical refers to something to do with language, words and vocabulary. It can also refer to a way of teaching a new or foreign language, the Lexical approach.

What is lexical impossibility in deconstruction literature?

It is when deconstructing literature becomes so diverse that it is coined 'lexical impossibility'.

How is spectrum analyzer operated?

Importance of lexical verb in sentences?

A lexical verb is the main verb of the sentence. All verbs include a lexical verb. A lexical verb does not require an auxiliary verb, but an auxiliary verb exists only to help a lexical verb. It cannot exist alone. A lexical verb is a verb that provides information. The opposite of lexical verbs are auxiliary verbs, which provide grammatical structure. Lexical verbs are an open class type of verb and are used to express…

What was the non-electronic differential analyzer?

What is the purpose of the Microsoft baseline security analyzer?

The Microsoft Baseline Security Analyzer is a program that attempts to assess some aspects of the security of an individual computer. It does this by checking two things: whether security updates released by Microsoft have been applied, and whether certain less-secure security settings are present. The security settings are assessed from a fixed list of registry and program checks.

When was Bible Analyzer created?

What is lexical inconsistency?

Lexical refers to the 'lexicon' or the kinds of words specific to a certain specialty or field. Think of it as slang or jargon, if you have a lexical inconsistency, the term you use in one specialty doesn't translate to other disciplines.

Will a C compiler compile a C plus plus program?

No. By definition, a C++ program is a program that can only be compiled by the C++ compiler. Although the two languages do have much in common, not all C programs are valid in C++ but no C++ program can ever be valid in C. That is, if a C++ program can be compiled by a C compiler then it was never a C++ program to begin with; it was just a C program.

What is the path for the report file created when you run Windows XP readiness analyzer?

What is the lexical similarity percentage between English and Dutch?

Lexical similarity percentages vary dramatically based on who is doing the study and what words are being compared. But many studies show that Dutch has at least a 60% lexical similarity to English.

What is an electrolyte analyzer?

An electrolyte analyzer is a piece of laboratory equipment that checks electrolyte levels.

Where online can one join AOL Fantasy Football?

To join AOL Fantasy Football, check the AOL page. AOL offers a draft analyzer, line-up analyzer, trade analyzer, and team analyzer to AOL members or those who register.

What do you understand by the term operator in C language?

An operator is a lexical token that identifies an operation to be performed on one, two, or three operands.

What is lexical and auxiliary?

Lexical verbs express action or state: run, walk, feel, love. Auxiliary verbs accompany a lexical (main) verb to show tense, voice, etc.: have run, had walked, has loved, was felt. Some verbs can be either a lexical verb or an auxiliary verb, e.g. have: as a main verb, "I have a new car"; as an auxiliary verb, "I have eaten my lunch".

What is the difference in meaning between the words analyzer and analyst?

The difference between the meaning of the words analyzer and analyst is that an analyzer is typically a machine whereas an analyst is typically a human being.

How do you run call of duty 4 with 3D analyzer?

Yes, you can run it. Just install 3D Analyzer on your PC, run it, select your .exe file, click the options you need, and run the program.

What is the utility programs for disk space analyzer provided by ubuntu?

What is meant by c program?

A C program is a computer program written using the C programming language.

C program to an executable program?

To make a C program an executable program, you run it through a compiler.


What is compile time error in C programming?

A compile time error, also known as a syntax error, is an error detected by the compiler due to a failure to comply with the rules of syntax or lexical constructs for the language. This is different than a logic error, which is not detected by the compiler, but nevertheless causes the program to not run correctly.

How does a person run a computer analysis?

Running a computer analysis requires downloading a free program such as System Analyzer. This program will look for increasing speed, disk space, system security, and many more things to get your computer in good running condition.

Difference between simulator and logic analyzer?

Simulators and analyzers are both used in device calibration. A simulator simulates what the device measures, i.e. the device does the measuring. An analyzer measures what the device measures, i.e. the analyzer does the measuring. Eng. Mohammed Sameer, Biomedical Engineer

C program was introduced in the year?

C was introduced in 1972 by Dennis Ritchie. Note that it was the C language, not a C program, that was introduced.

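Pick out the words belonging to the lexical field of politics (French: "Relevez les mots appartenant au champ lexical de la politique")?

The French instruction asks the reader to pick out the words that belong to the lexical field of politics.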

What is a lexical register?

A lexical register is related to the temper and pitch of a voice. People use different registers or levels of voice depending on the person addressed.

Features of c program?

When object file is created in c program?

In C program: never. From C source program: during the compilation.

Digital fourier analyzer?

A digital Fourier analyzer analyzes signals using the fast Fourier transform.

What is Advantage of logic analyzer with built in digital pattern generator over simulator?

Who can use a particle size analyzer?

A particle size analyzer should be used by anyone who has read the manuals before starting. The safest particle size analyzer is the laser diffraction particle size analyzer. Even though it works with a laser, the power is in the mW range, which does no harm to the eyes.